A Technical Review of Tencent's Hunyuan AI: Unlocking Cinematic Potential

The landscape of visual synthesis is shifting rapidly. Just when creators thought they understood the limits of current technology, a new contender emerged from Shenzhen. Developed by Tencent, Hunyuan AI represents a significant leap forward in the realm of open-source video synthesis, challenging established norms with its parameter-rich architecture.

For creative professionals and developers alike, the release of this 13-billion-parameter model is more than another headline: it is a functional expansion of what is possible in digital storytelling. This analysis dissects the technical capabilities of the Hunyuan architecture, explores its practical applications, and helps you determine whether the tool fits into your production pipeline.

The Architecture Behind the Motion

At its core, this system is a transformer-based diffusion model. Where many earlier video generators paired their diffusion backbone with a conventional text encoder, Tencent's approach uses a multimodal Large Language Model (LLM) as the text encoder that interprets prompt instructions.

In the "two-stream" part of the design, video tokens and text tokens first pass through separate transformer blocks and are then merged for joint processing, with attention applied across space and time together rather than in separate passes. The result is strong consistency in motion and lighting. The model also employs a 3D Variational Autoencoder (VAE), which compresses video data into a latent space, allowing for high-fidelity decoding at 720p resolution (upscalable to higher formats).
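To make the latent-space compression concrete, here is a minimal sketch of the shape arithmetic. The ratios used (4x temporal, 8x spatial, 16 latent channels) and the "first frame kept separately" rule are assumptions about a causal 3D VAE, not figures stated in this article; check the model's own documentation before relying on them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VaeCompression:
    # Assumed ratios for illustration only; not taken from this article.
    temporal: int = 4
    spatial: int = 8
    latent_channels: int = 16


def latent_shape(frames, height, width, c=VaeCompression()):
    """Return (channels, latent_frames, latent_height, latent_width)."""
    if height % c.spatial or width % c.spatial:
        raise ValueError("height and width must be divisible by the spatial ratio")
    if (frames - 1) % c.temporal:
        raise ValueError("frames - 1 must be divisible by the temporal ratio")
    # Assumption: a causal 3D VAE encodes the first frame on its own and
    # compresses the remaining frames in groups of `temporal`.
    latent_frames = (frames - 1) // c.temporal + 1
    return (c.latent_channels, latent_frames, height // c.spatial, width // c.spatial)


def compression_factor(frames, height, width, c=VaeCompression()):
    """Ratio of raw RGB values to latent values for one clip."""
    ch, t, h, w = latent_shape(frames, height, width, c)
    return (3 * frames * height * width) / (ch * t * h * w)


# A 129-frame clip at 1280x720 under the assumed ratios.
print(latent_shape(129, 720, 1280))        # (16, 33, 90, 160)
print(round(compression_factor(129, 720, 1280), 1))  # 46.9
```

Under these assumptions the transformer works on roughly 47 times fewer values than the raw pixels, which is why generation happens in latent space and only the final decode produces 720p frames.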

✨ Prompt Engineering Strategies for Dynamic Scenes

One of the most distinct features of this system is its semantic understanding. It does not require "robot-speak" to function. However, to truly unlock cinematic outputs, specific phrasing techniques are required.

Below are comparative examples of prompt engineering. Note that we are avoiding the generic "café" examples to focus on more complex texture and lighting scenarios.

Scenario A: High-Speed Action

Weak Prompt: "A car driving fast at night."

Optimized Prompt: "Cinematic tracking shot, Cyberpunk vehicle speeding through a neon-lit Tokyo highway, wet pavement reflections, motion blur, volumetric fog, 8k resolution, photorealistic render."

Scenario B: Macro Nature Detail

Weak Prompt: "A flower opening up."

Optimized Prompt: "Time-lapse macro photography, dew drop rolling off a vibrant purple orchid petal, soft bokeh background, natural morning sunlight, 60fps smooth interpolation."
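The optimized prompts above follow a recognizable order: shot type, subject, scene details, then technical tags. A small helper can keep that order consistent across a batch of prompts. This is a sketch of one way to organize prompt text; the function name `build_prompt` and its four-part split are our own illustration, not part of any Hunyuan tooling.

```python
def build_prompt(shot, subject, details=(), technical=()):
    """Join prompt parts in a fixed order: shot, subject, details, technical tags."""
    parts = [shot, subject, *details, *technical]
    # Drop empty entries and stray whitespace so no double commas appear.
    cleaned = [p.strip() for p in parts if p and p.strip()]
    return ", ".join(cleaned) + "."


# Rebuilds the Scenario A prompt from its components.
scenario_a = build_prompt(
    shot="Cinematic tracking shot",
    subject="Cyberpunk vehicle speeding through a neon-lit Tokyo highway",
    details=["wet pavement reflections", "motion blur", "volumetric fog"],
    technical=["8k resolution", "photorealistic render"],
)
print(scenario_a)
```

Keeping the parts separate makes it easy to swap only the lighting or only the camera move between takes while the subject stays fixed.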

💡 Practical Implementation & Hardware Realities

While the software is open-source, deployment is resource-intensive. Running Hunyuan AI locally requires substantial compute and memory. With 13 billion parameters to load, consumer-grade GPUs often struggle to maintain reasonable inference speeds.

System Requirements Breakdown

| Component | Minimum Requirement | Recommended for Smooth Workflow |
|---|---|---|
| VRAM | 24GB (Slow Inference) | 45GB+ (Optimal Performance) |
| System RAM | 64GB | 128GB |
| Storage | 100GB SSD | 500GB NVMe SSD |
| OS | Linux (Ubuntu 20.04+) | Linux (Ubuntu 22.04+) |
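A quick back-of-the-envelope calculation shows why the VRAM figures sit where they do. The sketch below estimates memory for the model weights only, using standard bytes-per-parameter values for common precisions. It deliberately ignores activations, the text encoder, and the VAE, so real usage will be higher; the helper names are our own.

```python
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weight_memory_gb(num_params, dtype="bf16"):
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_in_vram(num_params, vram_gb, dtype="bf16"):
    """True if the weights alone fit; activations and other modules need extra room."""
    return weight_memory_gb(num_params, dtype) <= vram_gb


params = 13_000_000_000  # the 13B figure quoted in this review
for dtype in ("fp32", "bf16", "int8"):
    print(dtype, weight_memory_gb(params, dtype), "GB")

print(fits_in_vram(params, 24))  # False
print(fits_in_vram(params, 45))  # True
```

At 16-bit precision the weights alone come to about 26 GB, which already exceeds a 24GB card. A plausible reading of the "Slow Inference" note is that a 24GB setup has to lean on offloading or lower-precision weights, both of which cost speed.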

📌 Best Practices for Local Setup:

If you possess the hardware, follow this sequence:

1. Clone the repository from GitHub.

2. Install Python dependencies via Conda.

3. Download the pre-trained weights (ensure you have stable internet, as files are massive).
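The three steps above can be sketched as a dry run that only prints the commands, so nothing is downloaded until you have checked them. The repository URL, the Hugging Face model id, the environment name, the Python version, and the `requirements.txt` and `ckpts` paths are all assumptions for illustration; confirm each one against the project's README.

```python
import shlex

# Assumed values: verify against the official README before running anything.
REPO_URL = "https://github.com/Tencent/HunyuanVideo"  # assumed repository location
ENV_NAME = "hunyuan-video"                            # hypothetical environment name
WEIGHTS_REPO = "tencent/HunyuanVideo"                 # assumed Hugging Face model id


def setup_commands(workdir="HunyuanVideo", python_version="3.10"):
    """Return the setup steps as argument lists, in the order they should run."""
    return [
        # 1. Clone the repository.
        ["git", "clone", REPO_URL, workdir],
        # 2. Create a Conda environment and install Python dependencies into it.
        ["conda", "create", "-y", "-n", ENV_NAME, f"python={python_version}"],
        ["conda", "run", "-n", ENV_NAME, "python", "-m", "pip", "install",
         "-r", f"{workdir}/requirements.txt"],
        # 3. Download the weights (requires the huggingface_hub CLI to be installed).
        ["huggingface-cli", "download", WEIGHTS_REPO,
         "--local-dir", f"{workdir}/ckpts"],
    ]


# Dry run: print each command instead of executing it.
for cmd in setup_commands():
    print(shlex.join(cmd))
```

Once the printed commands match the README, each list can be passed to `subprocess.run(cmd, check=True)` to execute the steps in order and stop on the first failure.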

Performance Assessment Matrix

Instead of a binary "good or bad" verdict, we rate the model on four criteria that matter most to professional editors.

- Motion Fluidity: ⭐⭐⭐⭐½ (4.5/5) – Exceptional handling of temporal consistency.

- Prompt Adherence: ⭐⭐⭐⭐ (4/5) – Understands complex spatial relationships well.

- Resource Efficiency: ⭐⭐ (2/5) – Extremely heavy on local hardware.

- Audio Synthesis: N/A – Current version is strictly visual; external audio tools are required.

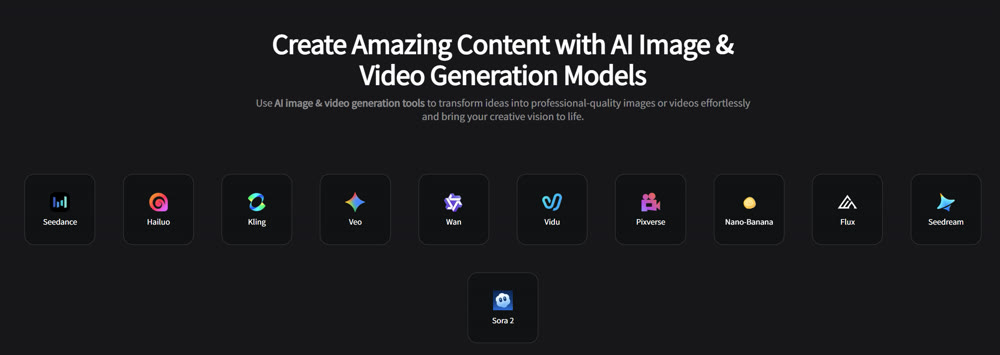

Streamlining Your Creative Workflow with Genmi AI

While running high-end models locally offers control, the hardware barrier is prohibitive for many creators. This is where cloud-based orchestration platforms become essential. Genmi AI serves as a premier hub for accessing top-tier synthesis technologies without the need for a $10,000 workstation.

Genmi AI provides a unified interface that aggregates the world's most powerful engines. Whether you are looking to start your creative journey or dive deep into specific model architectures, the platform handles the heavy lifting on the backend.

Advanced Model Access

For users who appreciate the fidelity of diverse architectures, Genmi offers direct integration with leading systems. You can experiment with the impressive capabilities of Hailuo for narrative consistency, or explore the distinct artistic styles offered by Flux. Furthermore, the platform supports the highly acclaimed Wan model, giving you a broad palette of visual styles to choose from.

Tools for Every Stage of Production

Beyond raw model access, the workflow is enhanced by specialized utility tools. You can seamlessly transform text into video for rapid storyboarding, or utilize image-to-image functions to modify existing assets while preserving their composition. To add the final polish, the suite of AI effects allows for post-production enhancements that would typically take hours in traditional compositing software.

Conclusion

Tencent's contribution to the open-source community is undeniable. It bridges the gap between commercial-grade visual effects and accessible technology. The model excels in maintaining visual fidelity and understanding complex natural language, making it a powerful asset for filmmakers and digital artists.

However, the steep hardware requirements act as a gatekeeper. For those with the infrastructure, running the model locally offers full control over the pipeline. For everyone else, cloud ecosystems provide access to comparable output without the technical overhead.

Take Control of Your Narrative

The future of storytelling is not about replacing human creativity, but amplifying it with the right tools. Don't let hardware limitations stifle your vision. Experience the power of advanced synthesis engines and elevate your production quality today at Genmi AI.
